Immer performance

egghead.io lesson 5: Leveraging Immer's structural sharing in React

egghead.io lesson 7: Immer will try to re-cycle data if there was no semantic change

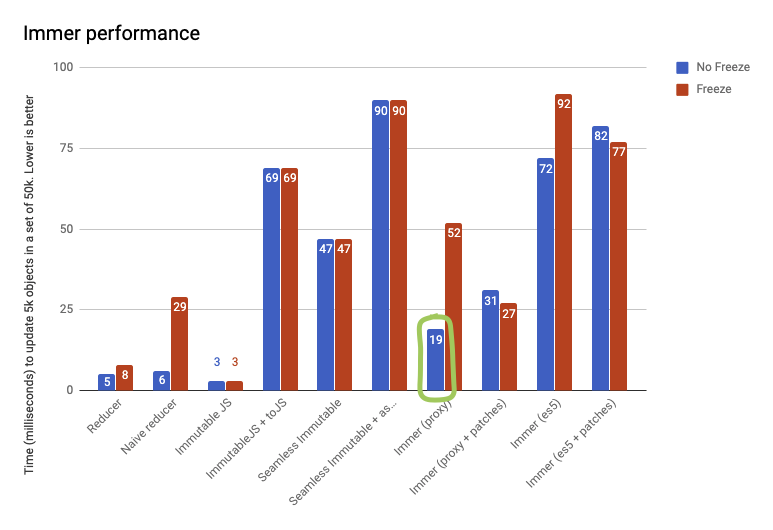

Here is a simple benchmark on the performance of Immer. This test takes 50,000 todo items and updates 5,000 of them. Freeze indicates that the state tree has been frozen after producing it. This is a development best practice, as it prevents developers from accidentally modifying the state tree.

Something that isn't reflected in the numbers above is that, in practice, Immer is sometimes significantly faster than a hand-written reducer. The reason is that Immer detects "no-op" state changes and returns the original state if nothing actually changed, which can, for example, avoid a lot of re-renders. Cases are known where simply applying Immer solved critical performance issues.

These tests were executed on Node 10.16.3. Use yarn test:perf to reproduce them locally.

Most important observations:

- Immer with proxies is roughly two to three times slower than a handwritten reducer (the above test case is the worst case; see yarn test:perf for more tests). This is in practice negligible.

- Immer is roughly as fast as ImmutableJS. However, the ImmutableJS + toJS result makes clear the cost that often needs to be paid later: converting the ImmutableJS objects back to plain objects in order to pass them to components, send them over the network, and so on. (There is also the upfront cost of converting data received from, for example, the server to ImmutableJS.)

- Generating patches doesn't significantly slow down Immer.

- The ES5 fallback implementation is roughly twice as slow as the proxy implementation, in some cases worse.

Performance tips

Pre-freeze data

When adding a large data set to the state tree in an Immer producer (for example data received from a JSON endpoint), it is worth calling freeze(json) on the root of the data being added first, to shallowly freeze it. This allows Immer to add the new data to the tree faster, as it avoids the need to recursively scan and freeze the new data.

You can always opt-out

Realize that Immer is opt-in everywhere, so it is perfectly fine to manually write super performance-critical reducers and use Immer for all the normal ones. Even from within a producer you can opt out of Immer for certain parts of your logic by using the utilities original or current, and perform some of your operations on plain JavaScript objects.

For expensive search operations, read from the original state, not the draft

Immer will recursively convert anything you read in a draft into a draft as well. If you have expensive, side-effect-free operations on a draft that involve a lot of reading, for example finding an index using findIndex in a very large array, you can speed this up by doing the search first and only calling produce once you know the index. This prevents Immer from turning everything that was searched into a draft. Alternatively, perform the search on the original value of a draft by using original(someDraft), which boils down to the same thing.

Pull produce as far up as possible

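Always try to pull produce 'up'. For example, for (let x of y) base = produce(base, d => { d.push(x) }) is far slower than produce(base, d => { for (let x of y) d.push(x) }), because every produce call creates a new draft, copies the array, and finalizes the result. Note the braces around d.push(x): a recipe that modifies its draft must not also return a value, and push returns the new array length.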